

Fine-Tuning Meta's LLaMA 2 with QLoRA

Introduction

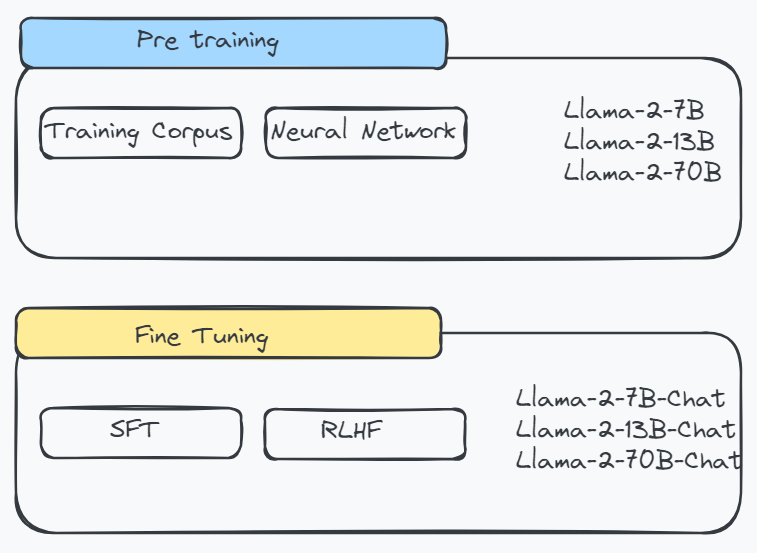

In this tutorial, we walk through fine-tuning Meta's LLaMA 2 with QLoRA (Quantized Low-Rank Adaptation), a method that combines 4-bit quantization of the base model with LoRA adapters. LLaMA 2 is Meta's second-generation family of openly released large language models (LLMs), available in pre-trained and chat-tuned variants. Fine-tuning trains LLaMA 2 further on your own dataset to improve its performance on a specific task.

Key Concepts

* **LLaMA 2:** Meta's open-source LLM collection
* **QLoRA:** A fine-tuning method combining quantization and LoRA
* **Fine-tuning:** Training a pre-trained model on a specific dataset
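To make the two halves of QLoRA concrete, here is a rough back-of-the-envelope sketch. It assumes a single 4096 x 4096 projection matrix (4096 is the hidden size of the 7B LLaMA 2 model), a LoRA rank of 16, and a round 7 billion parameters; the rank and the helper names `lora_trainable_params` and `weight_gigabytes` are illustrative choices, not values from this tutorial. The memory figures ignore the small overhead of quantization constants, activations, and optimizer state.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the two low-rank factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def weight_gigabytes(num_params: int, bits_per_param: int) -> float:
    """Approximate storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


hidden = 4096                      # hidden size of the 7B LLaMA 2 model
full_params = hidden * hidden      # weights in one full projection matrix
lora_params = lora_trainable_params(hidden, hidden, rank=16)  # assumed rank
fraction = lora_params / full_params

print(f"full matrix:  {full_params:,} parameters")
print(f"LoRA factors: {lora_params:,} parameters ({fraction:.2%} of the full matrix)")

# Quantization side: the frozen base weights stored in 16-bit vs 4-bit.
print(f"7B weights at 16-bit: ~{weight_gigabytes(7_000_000_000, 16):.1f} GB")
print(f"7B weights at  4-bit: ~{weight_gigabytes(7_000_000_000, 4):.1f} GB")
```

LoRA shrinks what has to be trained, and quantization shrinks what has to be held in memory; QLoRA applies both at once.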

Steps for Fine-Tuning

1. **Gather your dataset:** Prepare a dataset, proprietary or otherwise, that is relevant to the task you want to improve.
2. **Select a base model:** Choose a pre-trained LLaMA 2 checkpoint that suits your task and hardware. QLoRA is a technique applied to this base model, not a separate pre-trained model.
3. **Fine-tune the model:** Use the QLoRA fine-tuning technique to train the model on your dataset.
4. **Evaluate the performance:** Assess the fine-tuned model's performance on your task and make adjustments as needed.
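The model-loading part of these steps can be sketched with the Hugging Face `transformers`, `peft`, and `bitsandbytes` libraries. This is one possible setup under stated assumptions, not the only way to do it: the tutorial does not name a toolchain, the checkpoint `meta-llama/Llama-2-7b-hf` requires accepting Meta's license on the Hugging Face Hub, and every hyperparameter below (rank, alpha, dropout, target modules) is an illustrative starting point rather than a recommended value. The helper name `build_qlora_model` is hypothetical.

```python
# Assumed base checkpoint; gated on the Hugging Face Hub.
BASE_MODEL_ID = "meta-llama/Llama-2-7b-hf"

# 4-bit quantization settings for the frozen base weights (illustrative values).
QUANT_KWARGS = {
    "load_in_4bit": True,
    "bnb_4bit_quant_type": "nf4",        # NormalFloat4 data type
    "bnb_4bit_use_double_quant": True,   # also quantize the quantization constants
}

# LoRA adapter settings (illustrative values, tune for your task).
LORA_KWARGS = {
    "r": 16,
    "lora_alpha": 32,
    "lora_dropout": 0.05,
    "target_modules": ["q_proj", "v_proj"],  # attention projections in LLaMA
    "bias": "none",
    "task_type": "CAUSAL_LM",
}


def build_qlora_model(model_id: str = BASE_MODEL_ID):
    """Load a 4-bit base model and attach trainable LoRA adapters (needs a CUDA GPU)."""
    # Heavy imports are deferred so the settings above can be inspected anywhere.
    import torch
    from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    bnb_config = BitsAndBytesConfig(
        bnb_4bit_compute_dtype=torch.bfloat16, **QUANT_KWARGS
    )
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id, quantization_config=bnb_config, device_map="auto"
    )
    model = prepare_model_for_kbit_training(model)   # prepares the quantized model for training
    model = get_peft_model(model, LoraConfig(**LORA_KWARGS))
    return model, tokenizer
```

Calling `model, tokenizer = build_qlora_model()` on a machine with a suitable GPU returns a model whose base weights are frozen in 4-bit and whose only trainable parameters are the LoRA adapters; `model.print_trainable_parameters()` reports how many that is. Training on your dataset and evaluating the result would follow from there with whichever training loop you prefer.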

Benefits of Fine-Tuning

* Improved performance on specific tasks
* Customization for your dataset
* Time savings compared to training a model from scratch

Conclusion

Fine-tuning Meta's LLaMA 2 with QLoRA can improve the model's performance on your specific tasks while training only a small set of adapter weights on top of a quantized base model. By following the steps outlined in this tutorial, you can use LLaMA 2 and QLoRA to build solutions tailored to your NLP needs.
